Precision is not an accident.

It is the result of the Logic Lab.

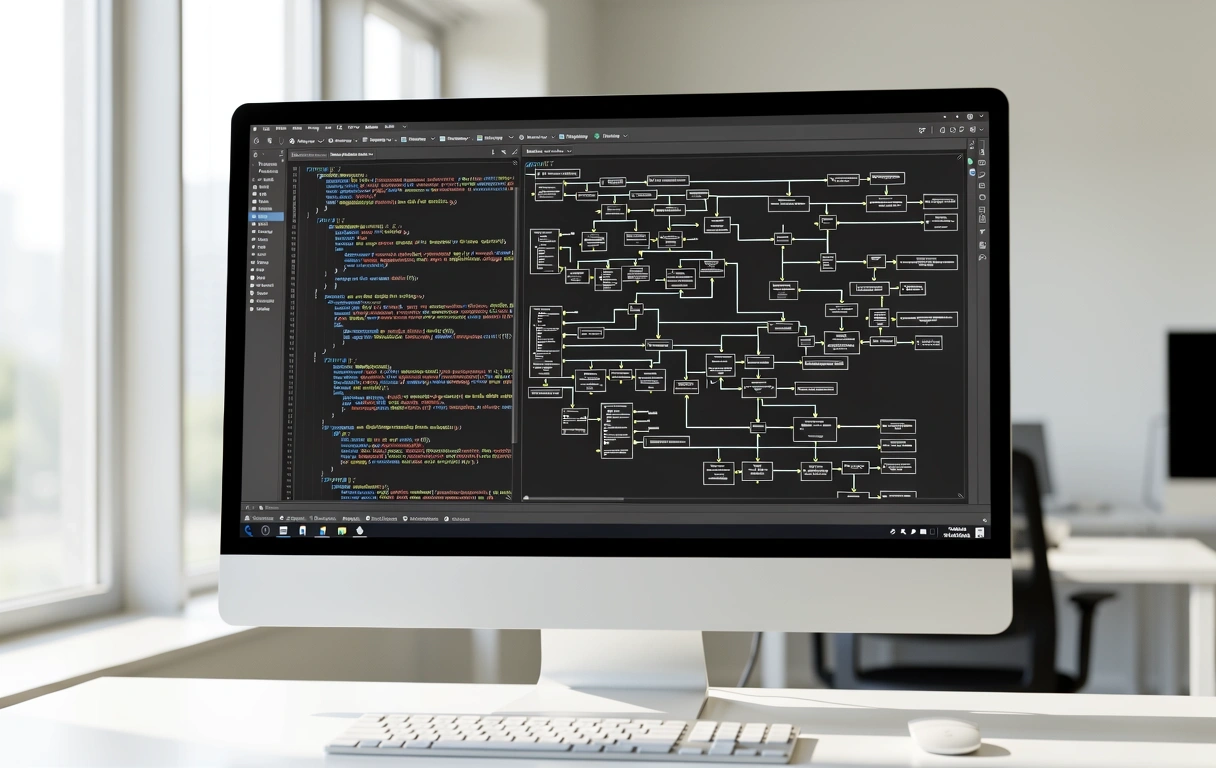

At SteppeLogicLabs, we move beyond standard data processing. Our methodology is a rigorous verification cycle designed to turn raw enterprise information into high-fidelity decision assets for the Australian market.

The Four-Stage Verification Cycle.

Integrity Baseline Assessment

Before a single line of code is written, we stress-test the source material. We identify the silos, latency issues, and ingestion gaps that often plague large-scale Australian enterprise systems. By establishing a clean baseline, we ensure the Logic Lab environment operates on facts, not assumptions.

Functional Mapping

We translate business goals into mathematical requirements. This involves building the structural scaffolding where your analytics will reside. We don't just connect APIs; we map the logical flow of information to ensure that output remains consistent even as your data volume scales.

Recursive Testing Models

Every model undergoes a recursive verification process. We run edge-case simulations and historical back-testing to verify that the logic holds up under pressure. This stage is where we break things in a controlled environment so they never break in your production suite.

Continuous Logic Refinement

Deployment is the beginning, not the end. We monitor "Logic Drift": the gap that opens between a model's assumptions and reality as market conditions change. Using real-time telemetry, we refine the algorithms to maintain high accuracy and relevance for your specific industry sector.
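As a rough illustration of what monitoring drift can look like, the sketch below compares a model's recent prediction error against the error measured at deployment. The function names, the sample figures, and the `tolerance` factor of 1.5 are all assumptions made for this example, not a description of our production tooling.

```python
from statistics import mean


def mean_abs_error(predicted, actual):
    """Average absolute gap between predictions and observed values."""
    return mean(abs(p - a) for p, a in zip(predicted, actual))


def has_drifted(baseline_error, recent_predicted, recent_actual, tolerance=1.5):
    """Flag drift when recent error exceeds the baseline error by `tolerance`.

    `tolerance` is a hypothetical threshold chosen for illustration only.
    """
    recent_error = mean_abs_error(recent_predicted, recent_actual)
    return recent_error > tolerance * baseline_error


# Error measured when the model was first validated (illustrative numbers).
baseline = mean_abs_error([10.0, 12.0, 11.0], [10.5, 11.5, 11.0])

# Later telemetry: the same kind of predictions now miss by a wider margin.
drifted = has_drifted(baseline, [10.0, 12.0, 11.0], [12.0, 14.5, 13.0])

# Telemetry that still tracks reality closely.
stable = has_drifted(baseline, [10.0, 12.0, 11.0], [10.2, 12.3, 11.1])
```

In practice the comparison would run on a rolling window of telemetry, and a flagged result would prompt a review of the model rather than an automatic change.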

Our Sydney-based operations leverage logic-driven infrastructure to provide local enterprises with low-latency, high-security data solutions.

"Data is abundant; logic is rare."

Many consultancies focus on the visual "wow" factor of dashboards. At SteppeLogicLabs, we believe a beautiful chart built on flawed logic is a liability. Our methodology prioritizes the unseen plumbing: the data pipelines, the validation scripts, and the logical constraints.

By focusing on the **Logic Lab** approach, we eliminate the noise. We acknowledge that variables change—interest rates, consumer behavior, and global supply chains are rarely static. Our systems are built to adapt to these variables rather than ignoring them in favor of a simpler, less accurate model.

Recognizing the Limits of Analytics

A responsible methodology requires an admission of limits. We do not promise absolute clairvoyance. Instead, we provide probabilistic clarity. We build models that define their own margin of error, ensuring you understand the confidence level behind every automated recommendation.
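One simple way to read "a model that defines its own margin of error" is an estimate that is always returned together with its confidence interval. The sketch below uses a normal approximation on a small set of sample values. The function name and the sample data are hypothetical, and real models may call for a different interval method.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def estimate_with_margin(samples, confidence=0.95):
    """Return (point estimate, margin of error) under a normal approximation."""
    # z-score for a two-sided interval at the requested confidence level.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    # Standard error of the mean, scaled by the z-score.
    margin = z * stdev(samples) / sqrt(len(samples))
    return mean(samples), margin


# Illustrative observations only.
samples = [102.0, 98.0, 101.0, 99.0, 100.0]

estimate, margin_95 = estimate_with_margin(samples)
_, margin_99 = estimate_with_margin(samples, confidence=0.99)
```

Asking for higher confidence widens the margin, which is the trade-off a reader of any automated recommendation should be able to see.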

Noise Mitigation

Identifying and filtering out outliers that skew long-term enterprise strategy.

Bias Correction

Auditing algorithmic inputs to prevent inherited systemic biases in software.

Technical Debt

Refactoring legacy data structures to support modern logic requirements.
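To make the noise mitigation item above concrete, here is a minimal sketch of one common outlier filter, the interquartile range (IQR) rule. The function name, the multiplier `k=1.5`, and the sample values are assumptions for illustration, and other filters may suit a given dataset better.

```python
from statistics import quantiles


def filter_outliers(values, k=1.5):
    """Keep values within k * IQR of the first and third quartiles."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if lower <= v <= upper]


# Illustrative series with one reading far outside the usual range.
readings = [10, 11, 12, 11, 10, 12, 11, 95]
cleaned = filter_outliers(readings)
```

Filtered values are best logged rather than silently discarded, since an apparent outlier can also be an early sign of a real change.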

Ready to apply the Logic Lab to your operations?

Documentation and theory only go so far. Let's discuss how our methodology can be tailored to your specific enterprise data landscape.

Methodology Freshness

Last updated: March 2026. Reviewed for Australian enterprise compliance standards.